SaaS with Private Runner Installation

This guide walks through connecting the RunWhen SaaS platform to your Kubernetes cluster using a private runner. The platform handles the UI, AI assistants, and coordination; the runner executes tasks inside your network so your data stays local.

Overview

| Step | What | Where | Time |
|---|---|---|---|
| 1 | Create account & workspace | RunWhen Platform (browser) | 5 min |
| 2 | Deploy the Helm chart | Your Kubernetes cluster | 10 min |
| 3 | Verify discovery & connectivity | Both | 5 min |
| 4 | Start using the platform | RunWhen Platform | n/a |

Step 1: Create Your Workspace

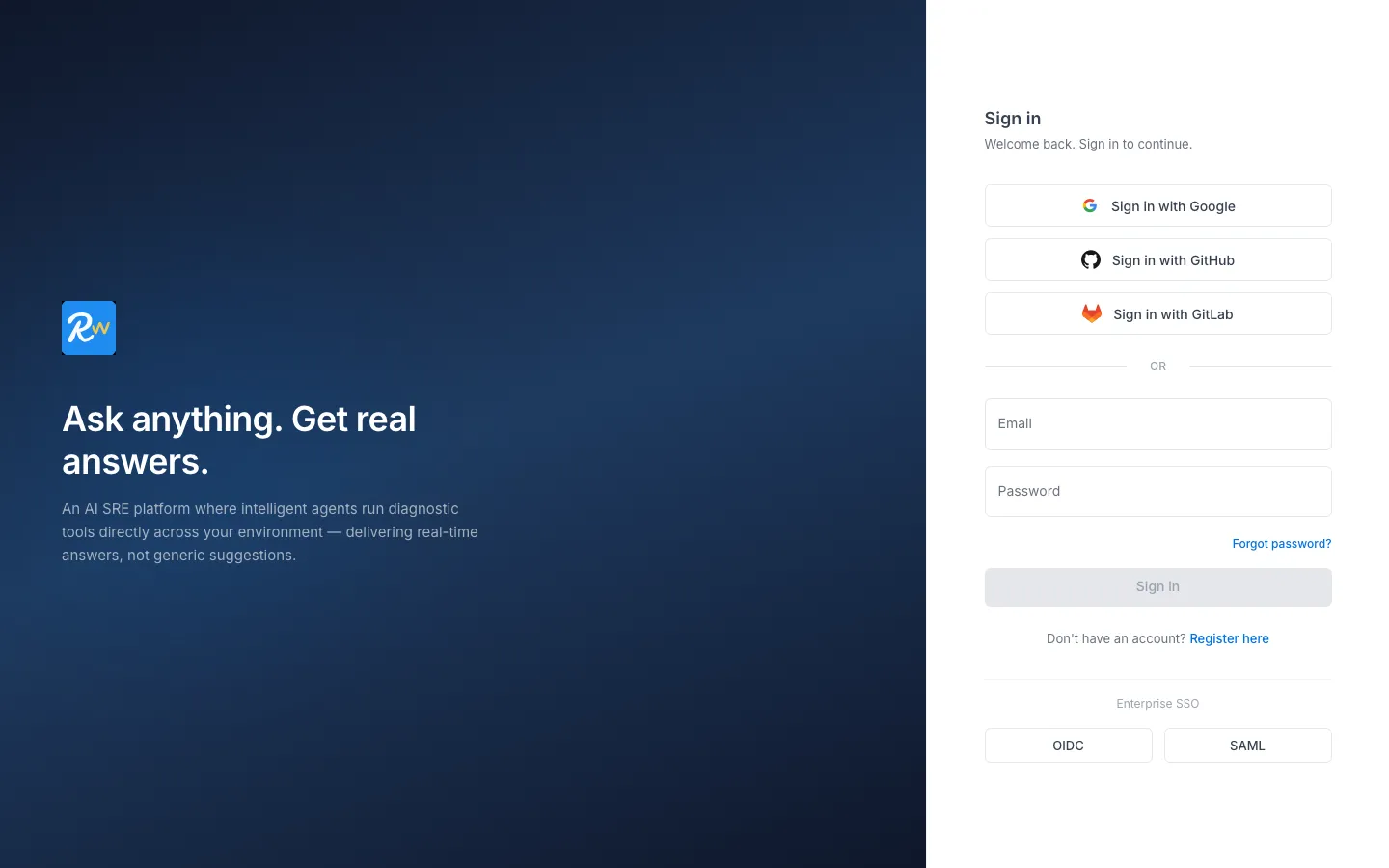

- Go to app.beta.runwhen.com and sign in (or create an account with Google, GitHub, GitLab, or email/password).

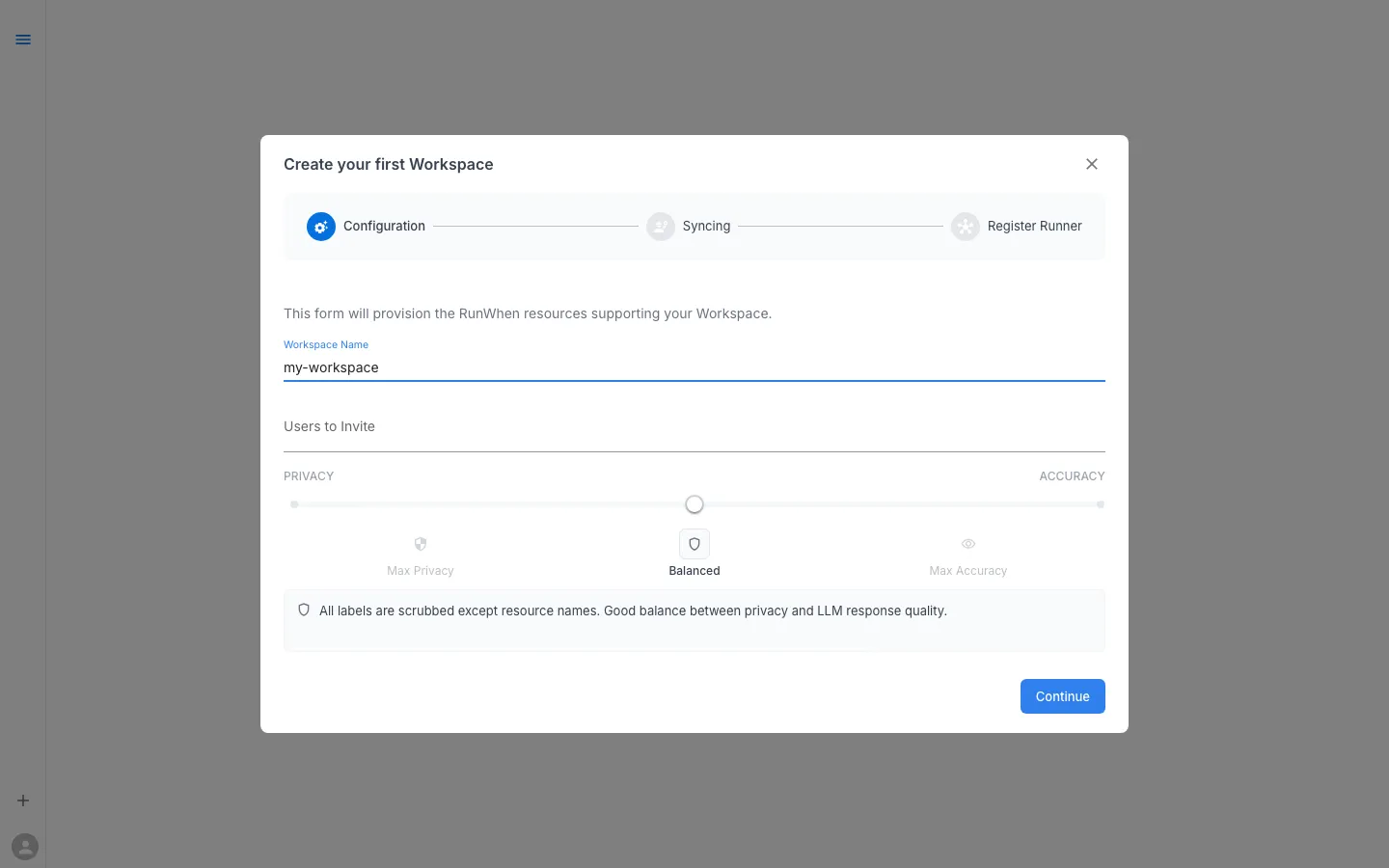

- If this is your first workspace you will be taken directly to the Create your first Workspace wizard. Otherwise, click the + button in the bottom-left corner.

-

Enter a workspace name (e.g.

my-workspace). -

Optionally invite team members and adjust the Privacy / Accuracy slider (Balanced is a good default).

-

Click Continue. The wizard walks through three steps:

- Configuration — workspace name, invites, and privacy settings (this screen)

- Syncing — the platform provisions backend resources for your workspace (takes 1–2 minutes)

- Register Runner — provides the

helm installcommand pre-filled with your workspace token. Copy this command for the next step.

-

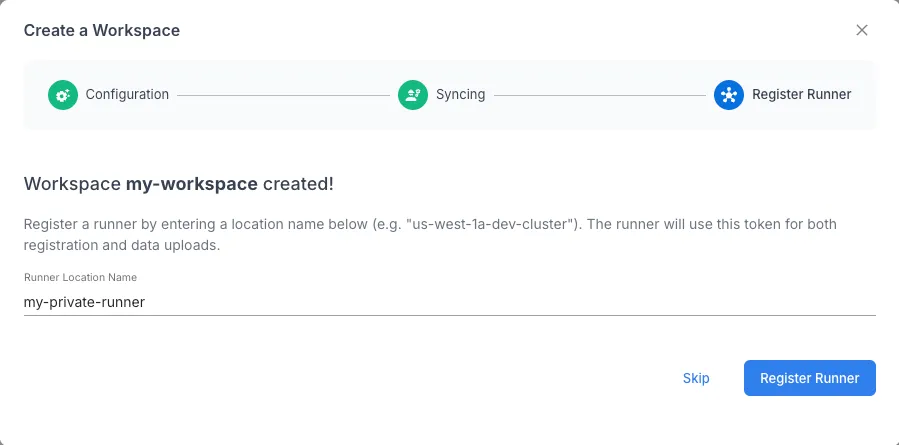

Provide a name for your private runner and Register Runner

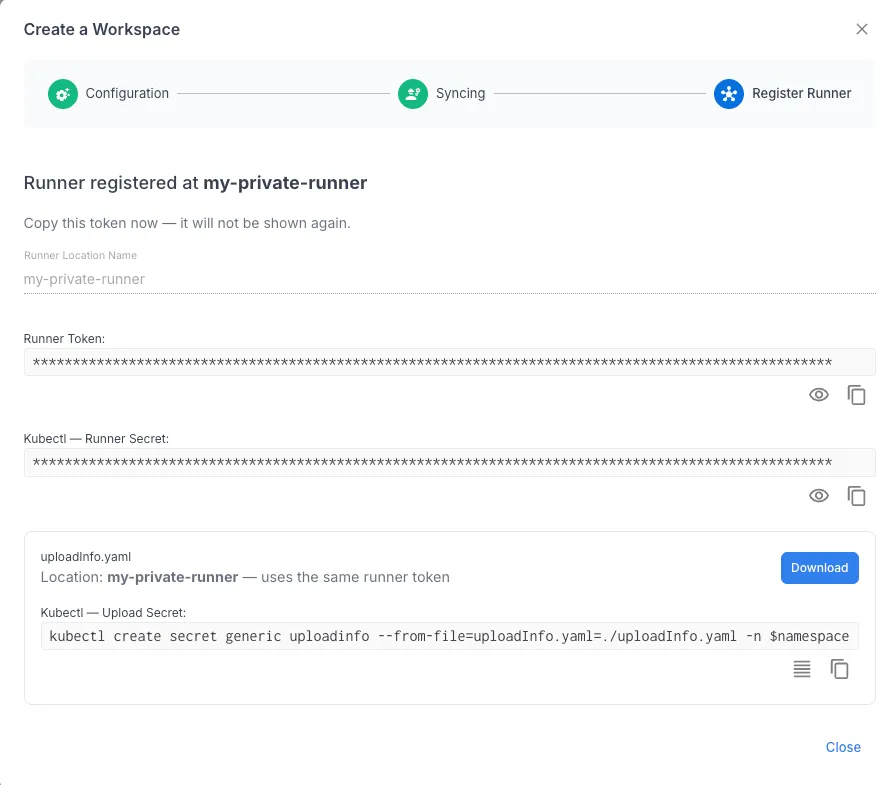

- Copy the Runner Token and download the uploadinfo.yaml file. You will add both to the runwhen-local namespace created in Step 2.

Network & Registry Requirements

All RunWhen Local components communicate outbound only — no inbound ports need to be opened. The following URLs must be reachable from the cluster over HTTPS (port 443).

Platform & Service Endpoints

| URL | Purpose | Used By |
|---|---|---|
| papi.beta.runwhen.com | Platform API calls | Runner, worker pods |
| runner.beta.runwhen.com | Runner control signals | Runner |
| runner-cortex-tenant.beta.runwhen.com | Metrics from workers and health-check pods | OpenTelemetry collector |
| vault.beta.runwhen.com | Secure secret storage and retrieval | Runner, worker pods |
| storage.googleapis.com | Execution log storage (via signed URL) | Worker pods |
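The reachability requirement above can be checked before installing anything. The following is a minimal sketch, assuming curl is available wherever it runs; the function names are illustrative and not part of any RunWhen tooling. It only tests whether an HTTPS request on port 443 completes, so it should be run from a pod or node inside the cluster rather than from a workstation.

```shell
#!/bin/sh
# Hosts copied from the Platform & Service Endpoints table.
ENDPOINTS="papi.beta.runwhen.com
runner.beta.runwhen.com
runner-cortex-tenant.beta.runwhen.com
vault.beta.runwhen.com
storage.googleapis.com"

# Succeeds if an HTTPS request to the host completes within 10 seconds.
# Any HTTP status counts as reachable; only DNS, TCP, TLS, or timeout
# failures make curl exit non-zero here.
check_endpoint() {
  curl --silent --output /dev/null --max-time 10 "https://$1"
}

# Print one line per host and return 1 if any host was unreachable.
check_all_endpoints() {
  failed=0
  for host in $ENDPOINTS; do
    if check_endpoint "$host"; then
      echo "ok      $host"
    else
      echo "BLOCKED $host"
      failed=1
    fi
  done
  return "$failed"
}
```

Calling check_all_endpoints prints one status line per host. A BLOCKED line points at a firewall, DNS, or proxy rule to review; a TLS-intercepting proxy without a trusted CA will also show up as BLOCKED.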

Container Registries

| Registry | Images | Used By |
|---|---|---|
| us-docker.pkg.dev | Runner, robot-runtime base image (Google Artifact Registry) | Runner, worker pods |
| us-west1-docker.pkg.dev | CodeCollection images (built per-collection, per-branch) | Worker pods |
| ghcr.io | Workspace builder image (runwhen-local) | Workspace builder |
| docker.io | OpenTelemetry Collector image | OTel collector pod |

CodeCollections & Discovery

| Endpoint | Purpose | Used By |
|---|---|---|
| github.com | CodeCollection repositories (discovery and automatic configuration) | Workspace builder |
| api.cloudquery.io | Discovery plugin installation | Workspace builder |
| runwhen-contrib.github.io | Helm chart repository (only from the install machine) | Helm client |

Additional access

If you are discovering cloud resources, the workspace builder and workers also need network access to the relevant Kubernetes, AWS, Azure, or GCP management APIs for any infrastructure included in discovery.

Proxy & private registries

If your cluster is behind a corporate proxy, the Helm chart supports proxy.httpProxy, proxy.httpsProxy, and proxy.noProxy settings. A custom proxy CA can be injected via proxyCA. Container image registries can be overridden with registryOverride for environments using private mirrors. See the values.yaml reference for details.
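As an illustration of the proxy settings, the sketch below writes them to a small values file. It assumes the three keys nest under a top-level proxy key, as their dotted names suggest, and that noProxy accepts a comma-separated string. The proxy address and bypass list are placeholders, not values from the chart, and proxyCA and registryOverride are left out because their exact structure is not described here.

```shell
#!/bin/sh
# Placeholder values: replace with your proxy address and bypass list.
PROXY_URL="http://proxy.example.internal:3128"
NO_PROXY_LIST="localhost,127.0.0.1,.svc,.cluster.local"

# Write the proxy overrides to a values file. Keeping them in a file avoids
# having to escape the commas in noProxy, which --set would require.
cat > proxy-values.yaml <<EOF
proxy:
  httpProxy: "${PROXY_URL}"
  httpsProxy: "${PROXY_URL}"
  noProxy: "${NO_PROXY_LIST}"
EOF
```

The resulting file can be passed to helm with -f proxy-values.yaml alongside the other install flags. Check the key names against the values.yaml reference before relying on them.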

Step 2: Deploy the Helm Chart

The runwhen-local Helm chart deploys three types of components into your cluster:

- Workspace builder: discovers resources in your cluster and matches them with relevant tasks

- Runner: coordinates task execution and manages worker lifecycle

- Workers: the pods that actually run tasks. The runner creates workers by pairing a CodeCollection (a library of task definitions) with the task runtime. This is also the primary way to scale: increase workerReplicas per collection as the number of managed resources grows.

2a. Add the Helm Repository

    helm repo add runwhen https://runwhen-contrib.github.io/helm-charts
    helm repo update

2b. Create the Namespace

    kubectl create namespace runwhen-local

2c. Install

Use the workspace name you chose in Step 1. The workspace creation wizard provides a ready-to-use helm install command with your workspace token pre-filled — you can copy it directly.

Example install command

    helm install runwhen-local runwhen/runwhen-local \
      --namespace runwhen-local \
      --set workspaceName=my-workspace \
      --set runner.enabled=true

Customizing your install

For production installs, create a values.yaml to configure discovery scope, CodeCollections, resource limits, and secrets. See the full values reference on GitHub.
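One way to start such a file is to dump the chart's defaults and edit them. The sketch below wraps the standard helm show values command; the function name and default file name are illustrative, and it assumes the runwhen repository from step 2a has already been added.

```shell
#!/bin/sh
# Write the chart's default values to a file that can be edited and then
# passed back to helm with -f. Requires the repo added in step 2a.
dump_default_values() {
  out_file="${1:-values.yaml}"
  helm show values runwhen/runwhen-local > "$out_file"
}
```

After editing the file, pass it to the install command with -f values.yaml. Settings given with --set on the same command line take precedence over the file.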

2d. Verify Pods Are Running

    kubectl get pods -n runwhen-local

    # Expected output:
    # NAME                                               READY   STATUS    AGE
    # runwhen-local-workspace-builder-xxxxxxxxxx-xxxxx   1/1     Running   60s
    # runwhen-local-runner-xxxxxxxxxx-xxxxx              1/1     Running   60s
    # rw-cli-codecollection-worker-0                     1/1     Running   45s
    # rw-cli-codecollection-worker-1                     1/1     Running   45s
    # rw-cli-codecollection-worker-2                     1/1     Running   45s
    # rw-cli-codecollection-worker-3                     1/1     Running   45s

Step 3: Verify Discovery & Connectivity

The workspace builder automatically scans your cluster on startup. Check its logs to confirm discovery completed:

    kubectl logs -n runwhen-local -l app.kubernetes.io/name=runwhen-local-workspace-builder --tail=50

Then return to the platform:

- Open your workspace at app.beta.runwhen.com

- You should see discovered resources and matched tasks populating your workspace

- Try asking a question in Workspace Chat (e.g. “What is the health of my cluster?”)
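If resources do not appear, a quick scripted check of pod status can narrow things down before reading logs. This is a minimal sketch with illustrative function names; it assumes the default column layout of kubectl get pods, where STATUS is the third field.

```shell
#!/bin/sh
# Read `kubectl get pods --no-headers` output on stdin and print the name and
# status of every pod whose STATUS column (field 3) is not "Running".
not_running_pods() {
  awk '$3 != "Running" { print $1, $3 }'
}

# Return 0 when no pod in the namespace reports a status other than Running.
# Note: an empty namespace also passes, because there is nothing to flag.
all_pods_running() {
  bad="$(kubectl get pods -n "${1:-runwhen-local}" --no-headers | not_running_pods)"
  if [ -n "$bad" ]; then
    echo "$bad"
    return 1
  fi
}
```

Running all_pods_running prints any pod that is not in the Running state and returns a non-zero exit code, which makes it usable in a wait loop or CI step.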

Step 4: Start Using the Platform

With discovery complete and the runner connected, you can:

- Ask questions in Workspace Chat and let the assistant investigate

- Browse issues that the platform surfaces from background health checks

- Run tasks on demand from the Workspace Studio

- Set up rules and commands to customize assistant behavior for your environment

- Invite team members under Settings → Users

See the Use section for detailed guides on each of these workflows.

Troubleshooting

| Symptom | Check |
|---|---|
| Pods stuck in ImagePullBackOff | Verify your cluster can reach ghcr.io. Check for image pull secrets if using a private registry mirror. |
| Runner can’t connect to platform | Confirm outbound HTTPS on port 443 to runner.beta.runwhen.com. Check proxy settings if behind a corporate firewall. |
| No resources discovered | Check workspace builder logs. Verify the service account has cluster-reader permissions: kubectl auth can-i list pods --all-namespaces --as=system:serviceaccount:runwhen-local:workspace-builder |
| Tasks fail to execute | Check runner pod logs. Ensure secrets referenced in secretsProvided exist in the namespace. |
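Several of the checks in this table can be gathered in one pass. The sketch below is an illustrative helper, not part of the chart; it only uses the label selector and service account name that appear in this guide, plus standard kubectl subcommands.

```shell
#!/bin/sh
NAMESPACE="runwhen-local"

# Print pod status, recent events, workspace builder logs, and the result of
# the discovery permission check, each under its own heading.
collect_diagnostics() {
  echo "== pods =="
  kubectl get pods -n "$NAMESPACE" -o wide
  echo "== recent events =="
  kubectl get events -n "$NAMESPACE" --sort-by=.lastTimestamp
  echo "== workspace builder logs =="
  kubectl logs -n "$NAMESPACE" -l app.kubernetes.io/name=runwhen-local-workspace-builder --tail=50
  echo "== discovery permissions =="
  kubectl auth can-i list pods --all-namespaces \
    --as="system:serviceaccount:$NAMESPACE:workspace-builder"
}
```

Redirecting the output to a file, for example collect_diagnostics > diagnostics.txt, gives a snapshot that can be attached to a support request. Review it for sensitive values first.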

For enterprise self-hosted deployments, see Self-Hosted Deployment Requirements and contact support@runwhen.com for planning assistance.